Evaluating Amazon's Mechanical Turk as a tool for experimental behavioral research

Abstract

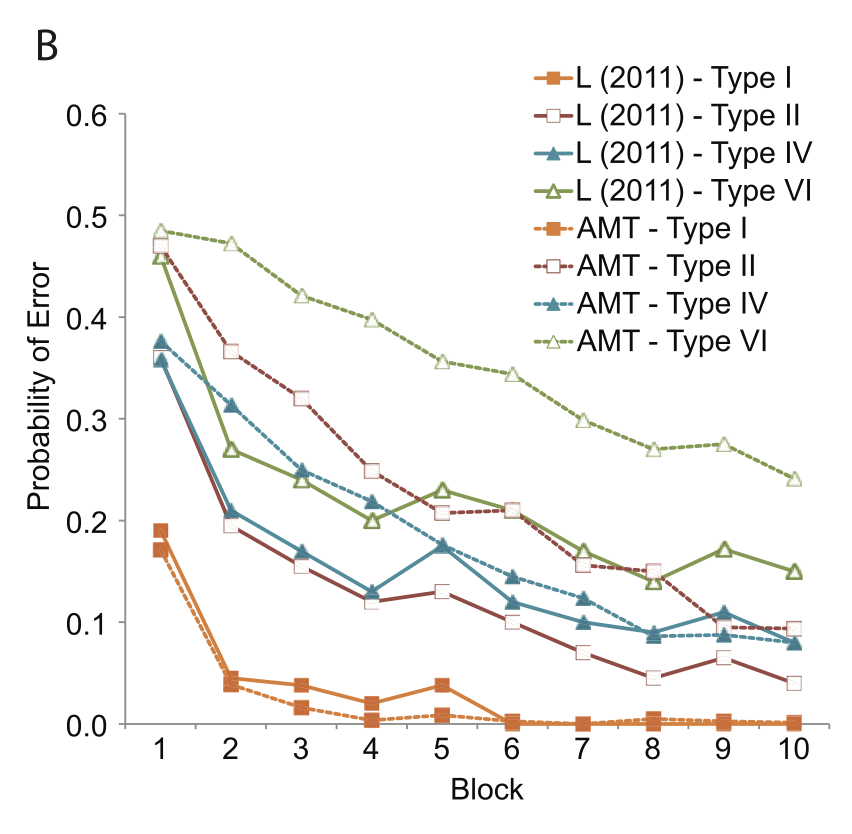

Amazon Mechanical Turk (AMT) is an online crowdsourcing service where anonymous online workers complete web-based tasks for small sums of money. The service has attracted attention from experimental psychologists interested in gathering human subject data more efficiently. However, relative to traditional laboratory studies, many aspects of the testing environment are not under the experimenter's control. In this paper, we attempt to empirically evaluate the fidelity of the AMT system for use in cognitive behavioral experiments. These types of experiments differ from simple surveys in that they require multiple trials, sustained attention from participants, and millisecond accuracy for response recording and stimulus presentation. We replicate a diverse body of tasks from experimental psychology, including the Stroop, Switching, Flanker, Simon, Posner Cuing, attentional blink, subliminal priming, and category learning tasks, using participants recruited through AMT. While most of the replications were qualitatively successful and validated the approach of collecting data anonymously online using a web browser, for others the alignment between laboratory results and online results showed more of a disparity. A number of important lessons were encountered in the process of conducting these replications that should be of value to other researchers.


Bibtex entry:

@article{crump2013evaluating,

abstract = {Amazon Mechanical Turk (AMT) is an online crowdsourcing service where anonymous online workers complete web-based tasks for small sums of money. The service has attracted attention from experimental psychologists interested in gathering human subject data more efficiently. However, relative to traditional laboratory studies, many aspects of the testing environment are not under the experimenter's control. In this paper, we attempt to empirically evaluate the fidelity of the AMT system for use in cognitive behavioral experiments. These types of experiments differ from simple surveys in that they require multiple trials, sustained attention from participants, and millisecond accuracy for response recording and stimulus presentation. We replicate a diverse body of tasks from experimental psychology, including the Stroop, Switching, Flanker, Simon, Posner Cuing, attentional blink, subliminal priming, and category learning tasks, using participants recruited through AMT. While most of the replications were qualitatively successful and validated the approach of collecting data anonymously online using a web browser, for others the alignment between laboratory results and online results showed more of a disparity. A number of important lessons were encountered in the process of conducting these replications that should be of value to other researchers.},

author = {Crump, Matthew JC and McDonnell, John V and Gureckis, Todd M},

journal = {PLoS ONE},

number = {3},

pages = {e57410},

publisher = {Public Library of Science},

title = {Evaluating Amazon's Mechanical Turk as a tool for experimental behavioral research},

volume = {8},

year = {2013}}